About Brain State Decoding in Brain-Computer Interfaces

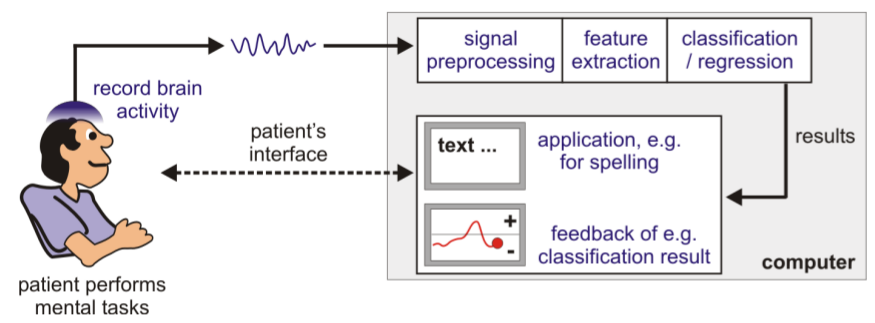

Figure 1: Diagram of a typical BCI system

In general, brain-computer interface (BCI) systems use state-of-the-art machine learning methods to decode ongoing brain signals in real time. BCIs can enable users to type text or control a computer or a wheelchair, even if they are severely motor-impaired. To do so, a BCI combines several components (as shown in Figure 1):

- Brain activity measurement: EEG, ECoG, fMRI, PET, NIRS, etc.

- Signal preprocessing: band-pass filtering, outlier removal, artifact correction, normalization, etc.

- Feature extraction: extract the relevant information from the acquired data, e.g. the band power of a neural oscillatory source of interest or the average amplitude after a stimulus (event-related potential)

- Classification: determine which brain state the recorded signals correspond to, i.e. decode the action the user intended, by applying machine learning methods

- Application/feedback: the classification result triggers a specific event, e.g. a letter appears on the screen, the wheelchair moves in a certain direction, or the user receives feedback on how well they performed in a training task
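The preprocessing, feature extraction and classification steps above can be sketched in a few lines of NumPy. This is a minimal, offline sketch under stated assumptions, not the method of any particular BCI lab: the data are simulated (two channels, two classes that differ in the strength of a 10 Hz rhythm, loosely mimicking motor imagery), and the 250 Hz sampling rate, the 8 to 12 Hz band, the crude FFT-based band-pass filter and the two-class linear discriminant analysis (LDA) classifier are all illustrative choices. The helper names (`bandpass_fft`, `log_band_power`, `erp_amplitude`, `simulate_trials`) are hypothetical.

```python
import numpy as np

FS = 250  # assumed sampling rate in Hz (illustrative)


def bandpass_fft(x, fs, lo, hi):
    """Preprocessing: crude zero-phase band-pass along the last axis.

    Zeroes every FFT bin outside [lo, hi] Hz. It needs the whole trial,
    so it is only suitable for offline analysis, not causal real-time use.
    """
    spectrum = np.fft.rfft(x, axis=-1)
    freqs = np.fft.rfftfreq(x.shape[-1], d=1.0 / fs)
    spectrum[..., (freqs < lo) | (freqs > hi)] = 0.0
    return np.fft.irfft(spectrum, n=x.shape[-1], axis=-1)


def log_band_power(x):
    """Feature extraction: log of the mean squared amplitude per channel."""
    return np.log(np.mean(x ** 2, axis=-1))


def erp_amplitude(x, fs, t_start, t_end):
    """Alternative feature: mean amplitude in a post-stimulus window (seconds)."""
    a, b = int(t_start * fs), int(t_end * fs)
    return x[..., a:b].mean(axis=-1)


class LDA:
    """Classification: two-class LDA with a pooled (shared) covariance."""

    def fit(self, X, y, reg=1e-3):
        mu0, mu1 = X[y == 0].mean(axis=0), X[y == 1].mean(axis=0)
        # Pooled within-class covariance, slightly regularized for stability.
        centered = np.vstack([X[y == 0] - mu0, X[y == 1] - mu1])
        cov = centered.T @ centered / (len(X) - 2) + reg * np.eye(X.shape[1])
        self.w = np.linalg.solve(cov, mu1 - mu0)
        self.b = -self.w @ (mu0 + mu1) / 2
        return self

    def predict(self, X):
        # Positive discriminant score -> class 1, otherwise class 0.
        return (X @ self.w + self.b > 0).astype(int)


def simulate_trials(n_trials, rng, fs=FS, seconds=2.0):
    """Hypothetical data: the class decides which channel carries the
    stronger 10 Hz rhythm; white noise is added to both channels."""
    t = np.arange(int(fs * seconds)) / fs
    y = rng.integers(0, 2, n_trials)
    X = rng.normal(0.0, 1.0, (n_trials, 2, t.size))
    for i, label in enumerate(y):
        amp = np.array([2.0, 0.5]) if label == 1 else np.array([0.5, 2.0])
        phase = rng.uniform(0, 2 * np.pi, 2)
        X[i] += amp[:, None] * np.sin(2 * np.pi * 10 * t + phase[:, None])
    return X, y


rng = np.random.default_rng(0)
X_raw, y = simulate_trials(200, rng)

# Preprocessing -> feature extraction -> classification
features = log_band_power(bandpass_fft(X_raw, FS, 8.0, 12.0))
clf = LDA().fit(features[:150], y[:150])
accuracy = np.mean(clf.predict(features[150:]) == y[150:])
print(f"held-out accuracy: {accuracy:.2f}")
```

The log of band power is used because it makes the feature distributions closer to Gaussian, which suits LDA's assumptions. An online BCI would instead apply a causal filter to a sliding window of incoming samples and pass each new feature vector to the already-trained classifier.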

Applications

Here are some examples of what can be done with a BCI system:

- Communication / text entry

- Control of a chess game

- Brain painting application

- Mind-controlled pinball machine

- Mind-controlled World of Warcraft

- Mind-controlled robotic arm

External resources

Want to know more?

- BBCI: BCI lab at the TU Berlin

- Graz BCI: BCI lab at the TU Graz

- Webpage of Fabien Lotte: list of BCI-related conferences and special issues